Author: “You should keep your dependencies up to date”

Reader: “No shit, tell me something I don’t know :)”

However, most teams I’ve worked in fall behind on maintenance over time.

Why?

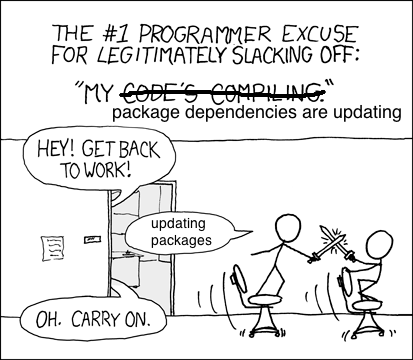

Because keeping dependencies up to date takes time and effort. In an environment where shipping is the primary objective, maintenance takes a back seat. Sure, once the effects of outdated dependencies become apparent (something breaks, or development slows down significantly), it’s easy for anyone in the organization to say “we should stay up to date with these things!”.

A couple of examples of when this happens:

- A new major version of Ruby on Rails is released, and six months later your other dependencies stop releasing updates for your old major version.

- The shiny new Webpack 2 (or 3) hits the streets, and all of a sudden your loaders stop supporting Webpack 1.

You don’t necessarily need to upgrade right away, but the day you do (because of security issues, perhaps), the migration will take far longer than if you had upgraded regularly. The challenge is to be proactive enough that the team is never put in that situation.

With that in mind, what actions can we as developers take to make sure this never ends in a production halt? For us, the main one is Engineering Maintenance Day (EMD).

An EMD takes place one day a month, per project, when we take the time to update dependencies, do a risk assessment and review our current technical debt.

We do it because we don’t want to fall behind on our dependencies and software: the pain of getting back on track tends to compound the longer you wait.

Software development moves fast these days, and the more code you touch, the more likely you are to introduce bugs, even when your intent is to improve it. That means more development time, more testing, and therefore more cost ($$$). And if a medium- or high-severity defect slips all the way through to a release, everyone ends up footing a huge bill.

On top of that, one of the larger macro-trends of the past decade is that the use of third-party dependencies has exploded. The open source JavaScript community, for example, is arguably the largest and most active open source community that has ever existed.

Check out the stats that GitHub announced last year: https://octoverse.github.com/

According to the statistics published by GitHub, open source JavaScript activity as measured by pull requests has doubled in the past two years. It’s more than the next two languages (Java and Python) combined.


Since we depend heavily on JavaScript at Sparta, we decided to introduce Engineering Maintenance Day to minimise the number of defects we ship to production.

Our team has three different products, each of which requires a different set of tasks during an EMD.

API (Ruby, Ruby on Rails)

- Heroku (Ruby version): Are there any new major or minor releases that we could benefit from?

- Database (Postgres): Same as above (we recently updated to 9.5+ because we needed JSONB as a column type).

- Gems: Go through the changelogs on a regular basis. Use a lockfile (Gemfile.lock). Gemnasium is one tool that helps automate this.

Web (Node/Express)

- Heroku (Node): Are there any new major or minor releases that we could benefit from?

- npm packages: Go through the changelogs of the updated dependencies. Lock your versions with a Yarn lockfile or npm-shrinkwrap. Greenkeeper.io can help you automate this.

Mobile (Swift)

- CocoaPods: Are there any new major or minor releases that we could benefit from?
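Every platform above comes back to the same question: is there a new major or minor release we could benefit from? With plain `MAJOR.MINOR.PATCH` semantic versions, answering it is a tuple comparison. The Python below is a minimal sketch under that assumption; `parse_version` and `classify_update` are hypothetical helpers, and they ignore prerelease tags and version ranges, which Bundler, npm and CocoaPods each handle in their own way.

```python
from typing import Optional, Tuple


def parse_version(version: str) -> Tuple[int, int, int]:
    """Parse 'MAJOR.MINOR.PATCH' (a leading 'v' and missing parts are tolerated)."""
    parts = [int(p) for p in version.lstrip("v").split(".")]
    if not 1 <= len(parts) <= 3:
        raise ValueError(f"not a MAJOR.MINOR.PATCH version: {version!r}")
    parts += [0] * (3 - len(parts))  # treat "9.5" as "9.5.0"
    return parts[0], parts[1], parts[2]


def classify_update(current: str, latest: str) -> Optional[str]:
    """Return 'major', 'minor' or 'patch' for the available update, or None if up to date."""
    cur, new = parse_version(current), parse_version(latest)
    if new <= cur:
        return None
    if new[0] != cur[0]:
        return "major"
    if new[1] != cur[1]:
        return "minor"
    return "patch"


# Hypothetical installed and latest versions, for illustration only.
for name, current, latest in [("example-gem", "4.2.8", "5.1.0"),
                              ("example-package", "2.6.1", "2.7.0")]:
    print(name, classify_update(current, latest))
```

Under semantic versioning, a major bump signals breaking changes and deserves a careful read of the changelog, while minor and patch bumps are often the cheap wins to take during an EMD.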

Verification during an EMD

This is one of the times when a proper test suite really shines, since we can update dependencies and quickly find any issues.

- Unit tests

- End-to-end tests

Forks

There will always be reasons for a fork to exist, and in a larger organisation it becomes hard to keep track of them all. It’s good to maintain a documented list of your forks and the reasons behind them, in case the person maintaining them at your company decides to move on.

Example:

(Dependency name). Reason for fork: we needed to expose a private attribute. Pull request submitted upstream on 12/2 2017 by <Name>.

We always put forks in our own organisation’s namespace on GitHub. That way we, as an organisation, keep control of them.
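Keeping the list of forks as structured data rather than free text makes it easy to review during an EMD. Below is a minimal Python sketch: the `Fork` record mirrors the fields in the example entry, while `forks_to_revisit` and its 90-day threshold are hypothetical choices for flagging forks whose upstream pull request has been pending for a long time, or was never sent.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class Fork:
    dependency: str
    reason: str
    upstream_pr_submitted: Optional[date]  # None if nothing has been sent upstream yet
    submitted_by: str = ""


def forks_to_revisit(forks: List[Fork], today: date,
                     max_age: timedelta = timedelta(days=90)) -> List[Fork]:
    """Return forks with no upstream pull request, or one older than max_age."""
    return [
        fork for fork in forks
        if fork.upstream_pr_submitted is None
        or today - fork.upstream_pr_submitted > max_age
    ]


# Hypothetical entries; in practice this list would mirror the documented one.
forks = [
    Fork("example-lib", "Expose a private attribute", date(2017, 2, 1), "<Name>"),
    Fork("other-lib", "Temporary hotfix", None),
]
for fork in forks_to_revisit(forks, today=date(2017, 6, 1)):
    print(f"Revisit {fork.dependency}: {fork.reason}")
```

A fork whose upstream pull request has been merged can usually be dropped in favour of the official release, which is one less thing to maintain.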

Locked versions

Some dependencies may have been locked to a specific version for a reason. Perhaps upgrading involves a larger refactoring because the new version exposes a new API. Either way, these reasons need to be documented somewhere.

If your dependency file supports comments (a Gemfile or Podfile does, package.json does not), that is a good place for them. If not, put them in a shared wiki of some sort.
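Since package.json is plain JSON with no comment syntax, the reasons for npm version locks have to live elsewhere, which makes it easy for the two to drift apart. Below is a minimal Python sketch of a check you could run during an EMD. It assumes the documented reasons are available as a simple name-to-reason mapping (for example, a hypothetical export of the wiki page) and uses a simplified notion of an "exact pin"; the function name and sample packages are made up.

```python
import json
import re
from typing import Dict, List

# Simplified: an exact pin is a bare version such as "1.2.3" (optionally "=1.2.3"),
# as opposed to a range like "^1.2.3" or "~1.2.3". Real npm range syntax is richer.
EXACT_PIN = re.compile(r"^=?v?\d+\.\d+\.\d+(-[0-9A-Za-z.-]+)?$")


def undocumented_pins(package_json: str, reasons: Dict[str, str]) -> List[str]:
    """Return names of exactly pinned dependencies that have no documented reason."""
    manifest = json.loads(package_json)
    missing = []
    for section in ("dependencies", "devDependencies"):
        for name, spec in manifest.get(section, {}).items():
            if EXACT_PIN.match(spec) and name not in reasons:
                missing.append(name)
    return sorted(missing)


manifest = """{
  "dependencies": {"example-router": "3.0.5", "example-ui": "^2.1.0"},
  "devDependencies": {"example-bundler": "1.15.0"}
}"""
# Hypothetical documented reasons, e.g. copied from the shared wiki.
reasons = {"example-router": "Next major exposes a new API; needs a larger refactor"}
print(undocumented_pins(manifest, reasons))  # ['example-bundler']
```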

Risk assessment

Even if we do EMD on a regular basis, we cannot avoid the fact that some work is simply too big to fit into one or two days. This is why we keep a separate document listing the risks and technical debt that we need to deal with at some point.

It’s organised so that each entry reads: “Risk for <X>, because <Y>, which means that <Z>. Therefore we need to <A>.”

Example:

“Risk for timeouts to occur, because the data layer (SQL queries and relations) is not optimized for the size of customers we are taking on, which means that scaling to bigger accounts will be harder. Therefore we need to spend some time on optimizing these parts.”

These items can then be reviewed on a regular schedule, perhaps bimonthly, when you go through them and allocate proper time and resources to the bigger maintenance projects.
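Because every entry follows the same four-part template, the risk document can also be kept as structured data and rendered into sentences for the review. Below is a minimal Python sketch, assuming a hypothetical `Risk` record whose fields map to <X>, <Y>, <Z> and <A>; fed the timeout example, it reproduces that entry word for word.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Risk:
    risk: str         # <X>
    cause: str        # <Y>
    consequence: str  # <Z>
    action: str       # <A>

    def statement(self) -> str:
        """Render the entry using the 'Risk for <X>, because <Y>, ...' template."""
        return (f"Risk for {self.risk}, because {self.cause}, "
                f"which means that {self.consequence}. "
                f"Therefore we need to {self.action}.")


risks: List[Risk] = [
    Risk(
        risk="timeouts to occur",
        cause=("the data layer (SQL queries and relations) is not optimized "
               "for the size of customers we are taking on"),
        consequence="scaling to bigger accounts will be harder",
        action="spend some time on optimizing these parts",
    ),
]

for entry in risks:
    print(entry.statement())
```

Keeping the four parts separate makes it harder to write an entry that names a risk without a concrete action, which is what the review needs in order to allocate time.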

This blog post was written together with my colleague Jonas Martinsson at Sparta. Shoutout to Erik Hedberg for technical proofreading.